Following the future QUADS roadmap, we decided to take a hands-on first try at re-architecting our legacy landing/requests portal application, previously a trusty LAMP stack, into a completely rewritten Flask / SQLAlchemy / Gunicorn / Nginx next-gen platform. What worked in Dev/Stage, however, ran into serious CPU bottlenecks in production. This is our journey.

Go LIVE!

After a couple of weeks of running a base stack locally and converting all the ugly PHP code into lovely Jinja2 templates, we do a stage deployment with a development environment and test every scenario we can imagine our users coming up with. Everything looks and feels great, so we decide it’s time for the Go LIVE!

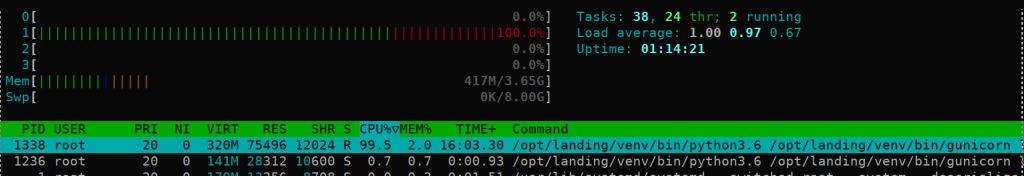

We prepare the production server, clone the repository and set up all the environment variables, this time with production-ready settings. We advertise the new site to our users, and people start coming in and going about their business as usual. At this point some might have experienced a bit of a delay when loading the page, but nothing serious, since we heard no complaints. After a couple of days and some heavier usage we notice a marked slowdown in response times, so we log into the server and run htop, and this is what comes up:

At this point it’s clear to us that there is a serious and non-intuitive performance issue with Gunicorn, especially since the Gunicorn gevent documentation states that each worker has its own event loop and can handle thousands of requests per second, and we were probably at less than 1% of that load.

Diagnosing

We got our first diagnostic step for free by running htop earlier. How do we keep digging into this issue?

First call is to run strace against the main gunicorn process:

-=>>strace -p 1236

strace: Process 1236 attached

select(6, [5], [], [], {tv_sec=0, tv_usec=382278}) = 0 (Timeout)

fstat(8, {st_mode=S_IFREG|001, st_size=0, ...}) = 0

fstat(9, {st_mode=S_IFREG|000, st_size=0, ...}) = 0

fstat(10, {st_mode=S_IFREG|000, st_size=0, ...}) = 0

fstat(11, {st_mode=S_IFREG|000, st_size=0, ...}) = 0

select(6, [5], [], [], {tv_sec=1, tv_usec=0}) = 0 (Timeout)

fstat(8, {st_mode=S_IFREG|000, st_size=0, ...}) = 0

fstat(9, {st_mode=S_IFREG|001, st_size=0, ...}) = 0

fstat(10, {st_mode=S_IFREG|001, st_size=0, ...}) = 0

fstat(11, {st_mode=S_IFREG|001, st_size=0, ...}) = 0

...

We see the timeout and suspect DNS, but we are still not sure with so little data, so we try the same on our second production instance, where the output turns out to be different.

-=>>strace -p 1236

...

epoll_wait(9, [{EPOLLIN, {u32=16, u64=12884901904}}], 64, 395) = 1

getpid() = 1338

epoll_wait(9, [{EPOLLIN, {u32=16, u64=12884901904}}], 64, 395) = 1

getpid() = 1338

...

At this point we rule out the DNS theory and call in additional support.

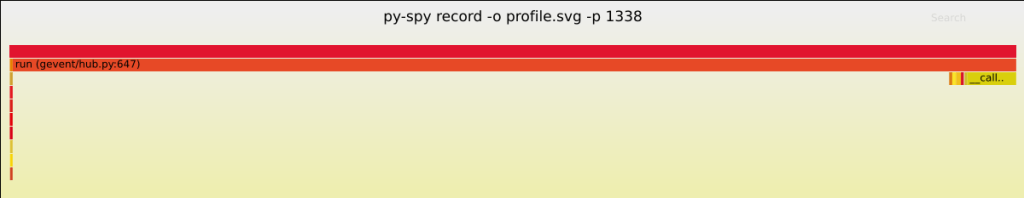

Py-spy: reporting for duty.

We run py-spy against the main gunicorn process and we are presented with the following output which incriminates gevent directly. We were clearly seeing the Gevent loop being blocked.

Gevent monkey-patches the blocking parts of the Python standard library (sockets, SSL, time.sleep and so on) so that they use asynchronous, non-blocking IO. It is an alternative to threading: instead of spawning threads, it runs a libev event loop and swaps most blocking IO for non-blocking IO. This lets you write Python in a synchronous style without the overhead of managing threads.

Gevent achieves concurrency using greenlets: each one is a “lightweight pseudo-thread”, and they cooperate via an event loop, yielding control to one another while they wait for IO.

Gevent and Gunicorn try their best to monkey patch blocking IO in the Python standard library, but they can’t control external C dependencies. This becomes a serious issue in web apps: if your event loop is blocked waiting on a C library’s IO, you can’t respond to any requests, even though you have plenty of system resources available.

Looking into the Gevent documentation, they even call this out explicitly:

“The greenlets all run in the same OS thread and are scheduled cooperatively. This means that until a particular greenlet gives up control, (by calling a blocking function that will switch to the Hub), other greenlets won’t get a chance to run. This is typically not an issue for an I/O bound app, but one should be aware of this when doing something CPU intensive, or when calling blocking I/O functions that bypass the libev event loop.”
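The behavior described in that quote is not specific to gevent; any cooperative scheduler has it. As a rough illustration, the sketch below uses Python's asyncio as a stand-in for the gevent loop. That is an assumption made so the example runs on the standard library alone, and it is not how gevent is implemented. Here time.sleep plays the role of IO inside an unpatched C library, and all function names are hypothetical.

```python
import asyncio
import time


async def cooperative_request(name, log):
    # `await` hands control back to the loop, as a monkey-patched call would.
    await asyncio.sleep(0.05)
    log.append(name)


async def blocking_request(name, log):
    # time.sleep() never yields: a stand-in for IO inside an unpatched C library.
    time.sleep(0.2)
    log.append(name)


async def serve(requests):
    """Run all requests on one event loop; return (completion order, seconds)."""
    log = []
    start = time.monotonic()
    await asyncio.gather(*(handler(name, log) for name, handler in requests))
    return log, time.monotonic() - start


if __name__ == "__main__":
    friendly = [(f"req-{i}", cooperative_request) for i in range(3)]
    print(asyncio.run(serve(friendly)))  # the three waits overlap

    mixed = [("blocker", blocking_request), ("req-0", cooperative_request)]
    print(asyncio.run(serve(mixed)))  # req-0 cannot start until blocker is done
```

In the second run the single blocking call holds the whole loop, so the well-behaved request pays for it too, which matches what we were seeing in production.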

The culprit

Luckily for us, we already know our app is not doing any heavy IO lifting: it only handles user registration and stores a history of user requests. That left us with a single suspect to blame: the database access.

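At the time we were using SQLAlchemy==1.4.29, which already has async support via asyncio, although by our reading it was not yet production ready and could only be used with PostgreSQL, while we were just using a simple SQLite db. Python’s sqlite3 module is a thin wrapper around the SQLite C library, so its calls are exactly the kind of blocking IO that gevent’s monkey patching cannot reach.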

Worker types

The gunicorn worker types can be categorized into 2 broad types: Sync and Async.

The default worker type is Sync and, as the name suggests, this worker handles one request at a time. You can run several such workers, and the number of requests served concurrently is limited to the number of workers.

On the other hand, Gunicorn also offers Async worker types such as gevent. Each worker spawns greenlets to handle new requests. When a greenlet hits an IO-bound action it yields and hands control to another greenlet, so when many requests come in the app can handle them concurrently. This keeps IO waits from tying up the CPU, improving throughput and reducing the resources needed to serve each request.

Despite all this, we can identify some caveats to using gevent.

Gevent Caveats

- Using gevent requires all of your code to be cooperative. You need to ensure that every DB driver, client and 3rd party library you use is pure Python, with no C dependencies doing their own IO, so that it can be monkey-patched. You need to write your code with gevent in mind and make sure it yields during IO operations, so that the other greenlets in that worker are not blocked.

- Using greenlets, the connections to your backend services will multiply and they need to be handled. You need to have connection pools that can be reused and at the same time ensure that your app and backend service can create and maintain that many socket connections.

- CPU blocking operations are going to stop all of your greenlets, so if your requests involve CPU-bound tasks gevent is not going to help much. Of course, you can explicitly yield in between these tasks, but you will need to ensure that part of the code is greenlet safe.

- You will need to do all of this every time you or your team write a new piece of code.

Sync FTW

If your backend services are all on the same network, latency will be very low and you shouldn’t need to worry about blocking IO. With ~4 gthread sync workers you can easily handle thousands of concurrent users, given a proper application cache. If you need more throughput you can add more threads per worker, and the memory footprint per thread will be lower than that of an extra worker process.
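To see why threads cope with calls that never yield, here is a minimal sketch using the standard library's ThreadPoolExecutor as a stand-in for the threads of a gthread worker. It is an analogy rather than Gunicorn's actual implementation; handle_request and serve are hypothetical names, and time.sleep stands in for a blocking call.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(request_id):
    # Stand-in for a blocking call (for example a query through a C driver).
    time.sleep(0.1)
    return request_id


def serve(request_ids, threads):
    """Handle all requests with a fixed thread pool; return (results, seconds)."""
    start = time.monotonic()
    with ThreadPoolExecutor(max_workers=threads) as pool:
        results = list(pool.map(handle_request, request_ids))
    return results, time.monotonic() - start


if __name__ == "__main__":
    print(serve(range(4), threads=1))  # requests are handled one after another
    print(serve(range(4), threads=4))  # the blocking waits overlap
```

The operating system preempts a thread that is stuck waiting, so one slow call does not hold up the others the way it does on a single event loop.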

If you have a large memory footprint per worker, maybe because you are loading a large model, try setting preload_app = True (or passing --preload), which loads the application once in the master process before forking the workers.

If your app makes multiple outbound calls to APIs outside your network, then you must safeguard it by setting reasonable timeouts on your urllib/requests connection pool. Also make sure that your application has reasonable worker timeouts, so that one or more bad requests don’t block your other requests for a long time.
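As a sketch of the outbound-timeout advice, the helper below wraps urllib from the standard library. The name fetch and the 3 second default are assumptions for illustration, not values from our application. The requests library accepts a similar timeout argument on each call.

```python
import urllib.request


def fetch(url, timeout_s=3.0):
    """Return the response body, or None if the call fails or times out."""
    try:
        # Without `timeout`, a stalled remote can hold this worker indefinitely.
        with urllib.request.urlopen(url, timeout=timeout_s) as response:
            return response.read()
    except OSError:
        # URLError, HTTPError and socket timeouts are all OSError subclasses.
        return None
```

A caller can then treat None as "the remote API is unavailable" and degrade gracefully instead of tying up a worker.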

Even though we did not fix the underlying issue directly or make any changes to our codebase, the solution for us was simply to change the worker type from gevent to gthread.
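For reference, a Gunicorn config file is plain Python, so the change can be sketched as below. This is a minimal example and not our actual production file: worker_class is the setting we changed, while the bind address, thread count and timeout are assumed values you would tune for your own deployment.

```python
# gunicorn.conf.py (illustrative values, not our production settings)

bind = "127.0.0.1:8000"   # assumed address; Nginx would proxy to it

workers = 4               # roughly the "~4 workers" mentioned above
worker_class = "gthread"  # was "gevent" before the change
threads = 4               # assumed; threads per worker

timeout = 30              # seconds before a stuck worker is killed and restarted
preload_app = True        # optional: load the app once, then fork the workers
```

It would be started with something like gunicorn -c gunicorn.conf.py app:app, where app:app is a placeholder for your own module and application object.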

Take-away

- Stage==Production && Stage!=Development

- Beware of blocking IO in gevent applications

- When in doubt, choose sync gunicorn workers